🔍 Discover

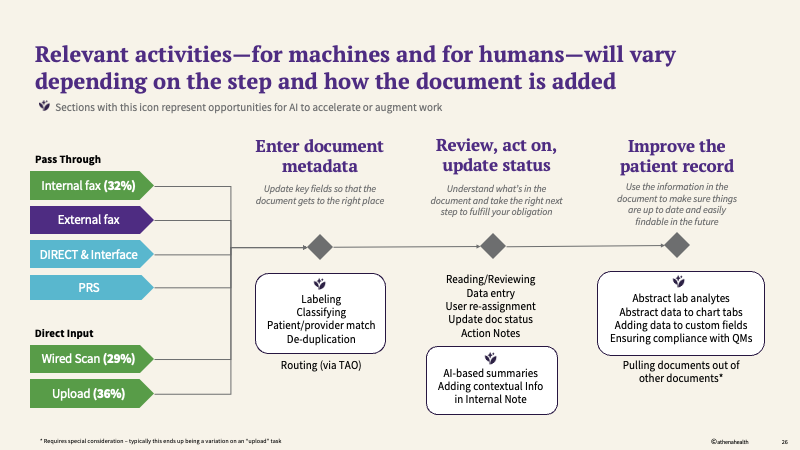

When I joined this initiative in late spring 2025, the team was focused heavily on improvements to our classification and labeling models, with the aim of reducing administrative burden on clients and our business processing office (BPO) while increasing accuracy. While this work was highly technical and crucial to success, leadership also saw the opportunity to determine where we could add value to the experience by:

- extracting more information out of documents that had been run through OCR to populate relevant areas of the experience

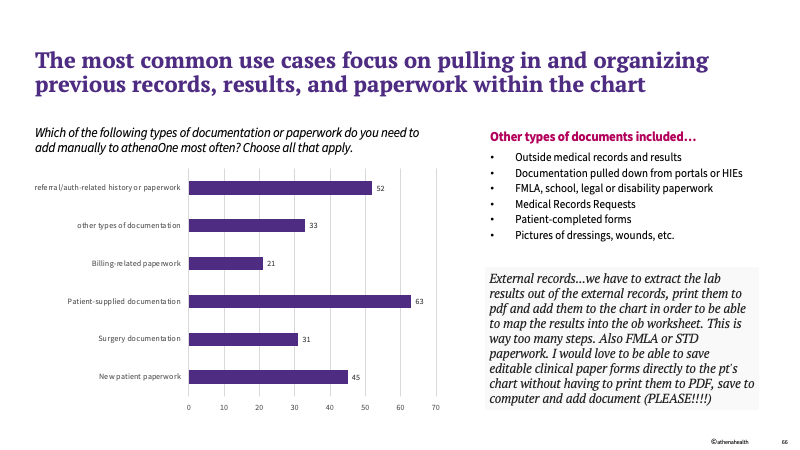

- improving how users add and organize documents within the chart manually - an experience that happens as often as faxing, or more often

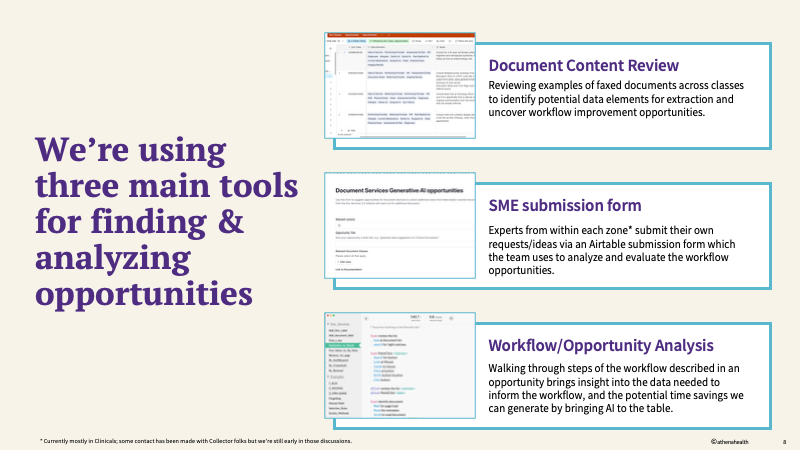

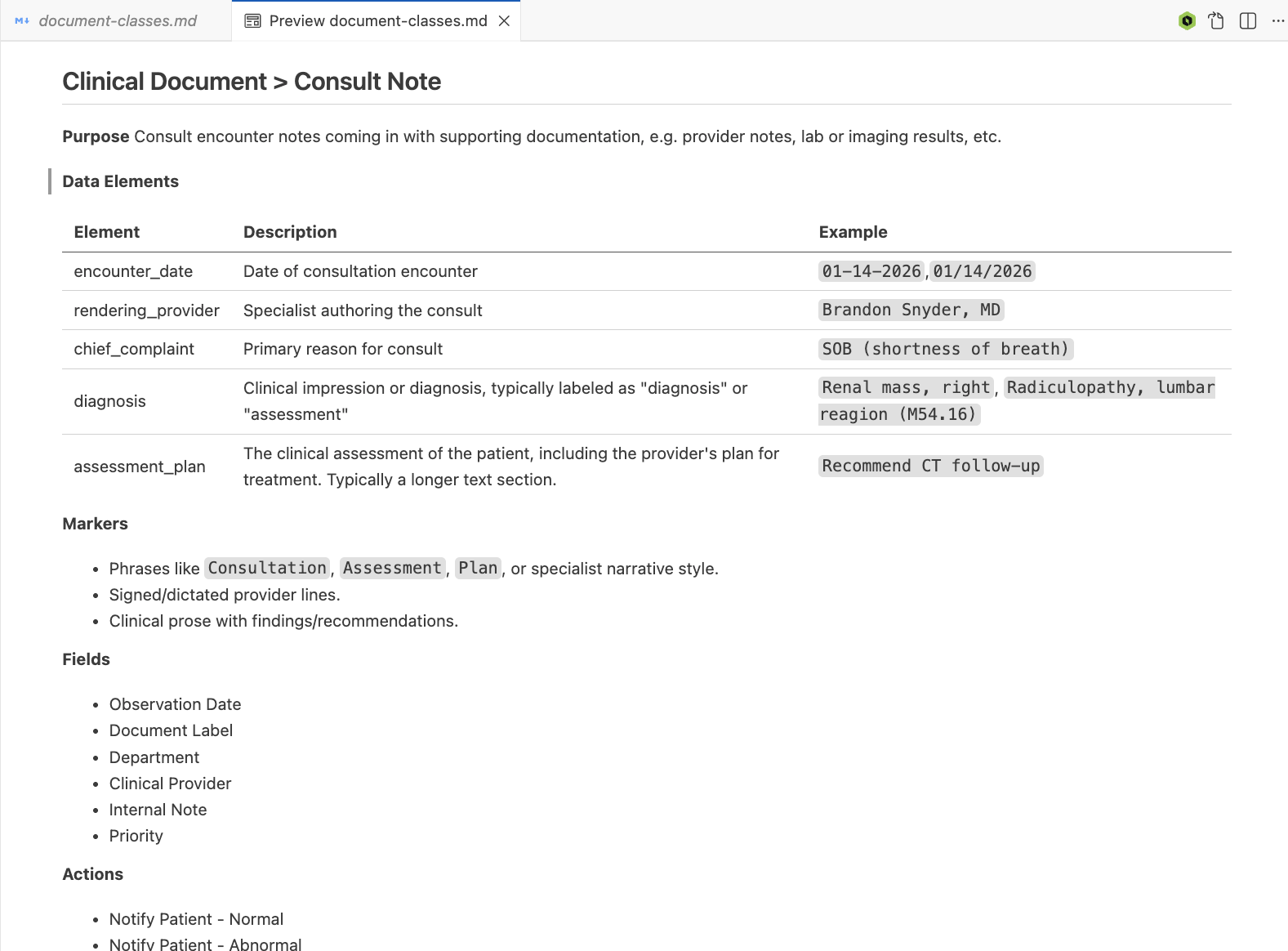

I started by digging into the documents themselves, pulling examples from client contexts across various high-value document classes such as referrals, admission/discharge summaries, and consult notes. Using a custom Airtable database, I did an object-oriented UX (OOUX) and content analysis exercise to uncover the type and meaning of content within each document class, along with the data elements we could potentially extract. This gave us a pool of opportunities to start considering as a team.

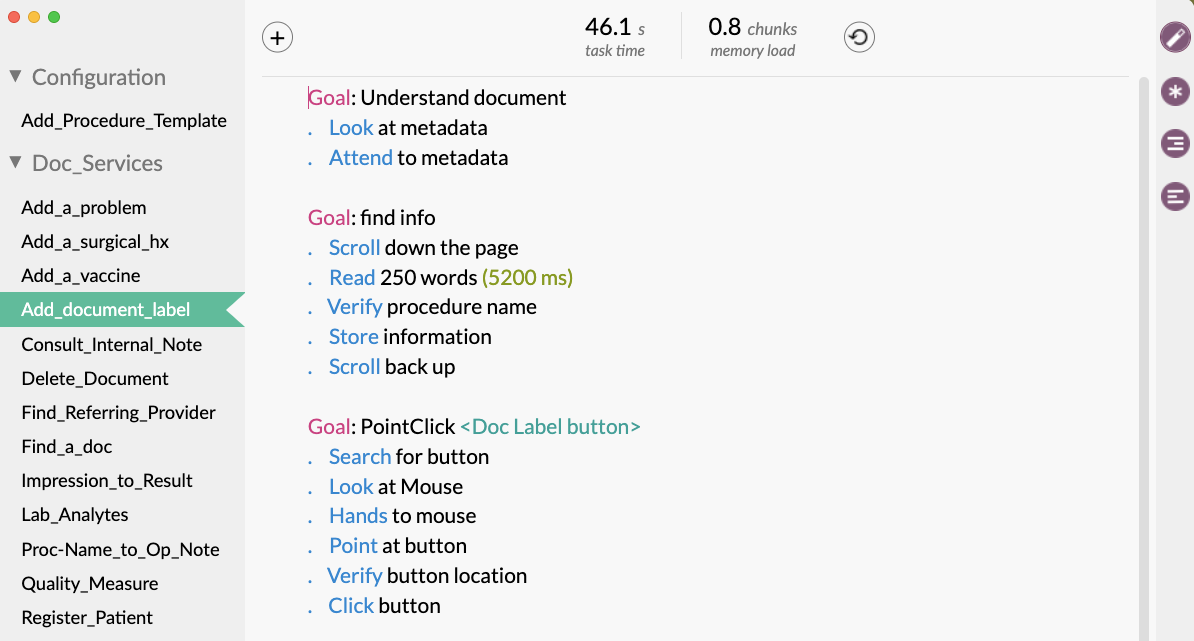

To help solidify which opportunities we should prioritize, I also used a tool called Cogulator to estimate how many seconds it would take an experienced user to complete each workflow we were considering automating. By multiplying the seconds per document by the volume of documents received by fax or added internally, we were able to demonstrate the potential time savings we could create for our users by pursuing each opportunity.

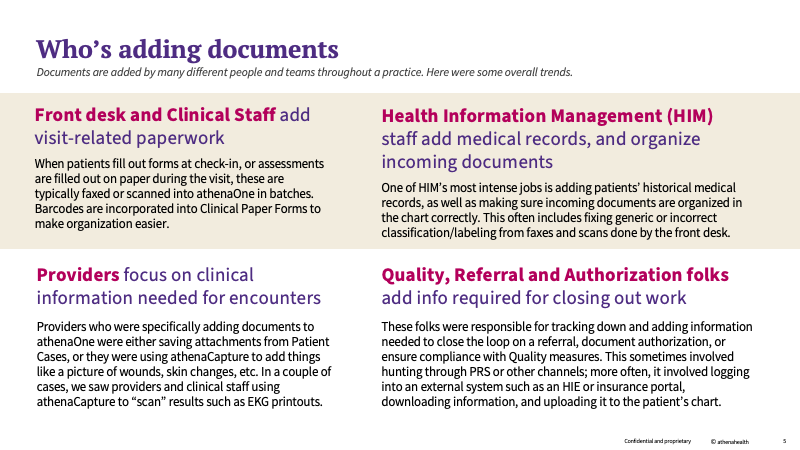
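The time-savings estimate described above is simple arithmetic, and a minimal sketch of it might look like the following. The opportunity names, task times, and volumes are hypothetical placeholders, not figures from the project, and the calculation assumes automation removes the whole manual task, so it is an upper bound.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    name: str
    seconds_per_document: float  # task-time estimate, e.g. from a Cogulator model
    documents_per_month: int     # faxed plus manually added volume (assumed unit)


def monthly_hours_saved(opp: Opportunity) -> float:
    """Upper-bound hours saved per month if the manual workflow is fully automated."""
    return opp.seconds_per_document * opp.documents_per_month / 3600


# Hypothetical values for illustration only.
opportunities = [
    Opportunity("Hypothetical opportunity A", 45.0, 12_000),
    Opportunity("Hypothetical opportunity B", 20.0, 30_000),
]

# Rank opportunities by estimated savings, largest first.
ranked = sorted(opportunities, key=monthly_hours_saved, reverse=True)

for opp in ranked:
    print(f"{opp.name}: {monthly_hours_saved(opp):.1f} hours/month")
```

A lower per-document time can still win the ranking when volume is high enough, which is why both inputs matter when comparing opportunities.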

As the initiative continued, we recognized that incorporating manually added documents into our understanding was an important addition to our framework. Many of the documents that practices bring in manually either augment or replace information that’s brought in from outside - so many of the opportunities we were considering could be applied easily to both externally received and internally added documents.

To break down this problem space, we conducted:

- a series of interviews with HIM and practice managers to determine how documents are added to the system manually, including which types of documents are added and by which methods

- 3 site visits to see how HIM departments, front desk staff, and clinical staff get documents into the chart, and how they work with documents in the system

The outcome of that research was a clear understanding of who adds documents manually, how, and why, along with a picture of how these documents could be incorporated into the opportunities we were considering for other types of documents.

📖 Define

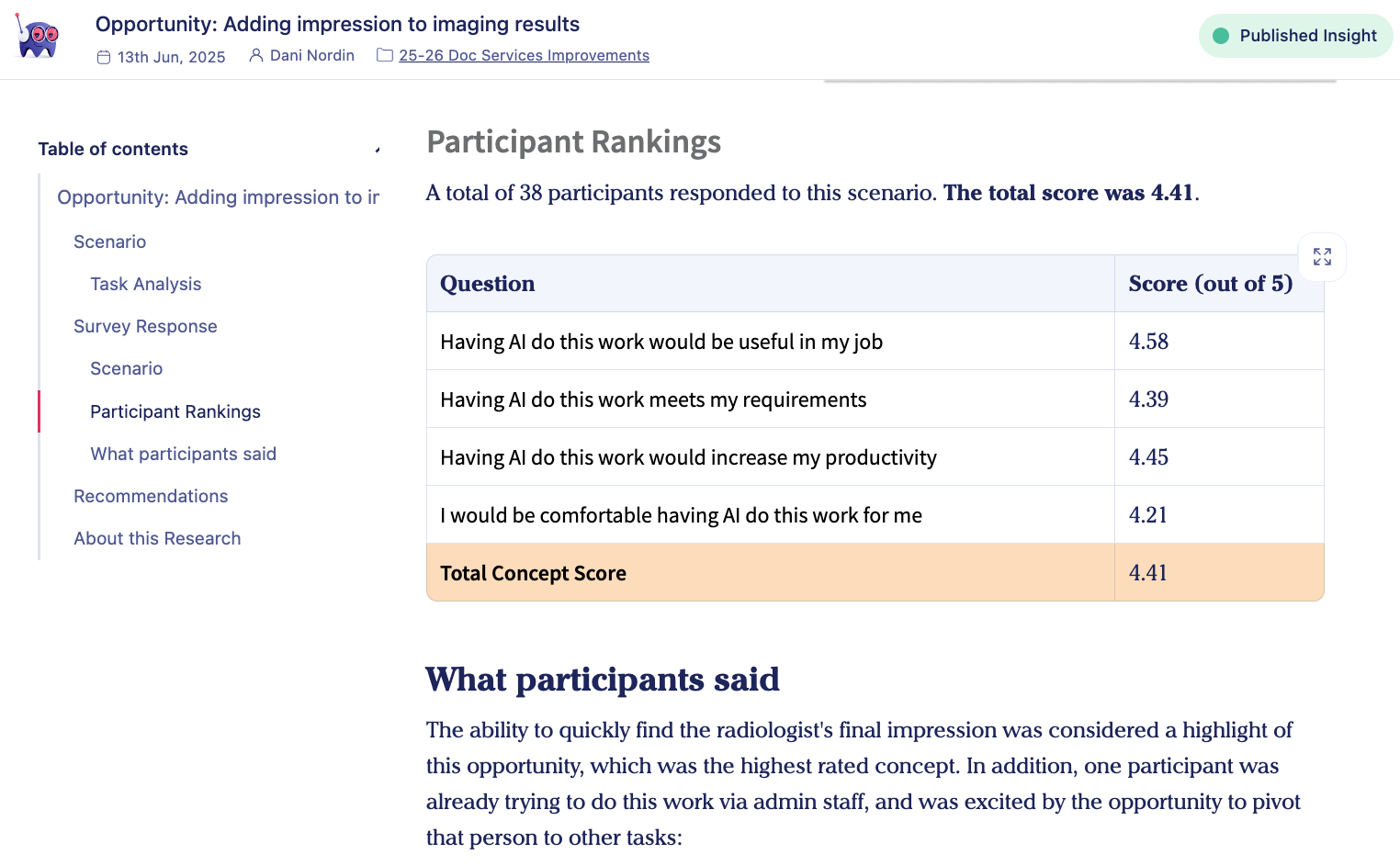

Throughout the project, we used surveys to capture feedback on the opportunities that seemed most interesting to us and recruit participants for later interviews and usability tests. Examples included:

- A concept description/rating study that showed participants 2-3 opportunities we were thinking about, selected based on what they do in the EHR. For each opportunity, we asked them to rate the concept on its usefulness and its ability to improve their effectiveness and efficiency with these document types.

- A MaxDiff survey that asked participants to rank 10 opportunities (some currently in process, others being considered) in terms of their perceived value. This enabled us to both determine what to prioritize next, and to balance priorities under consideration against what we were already doing.

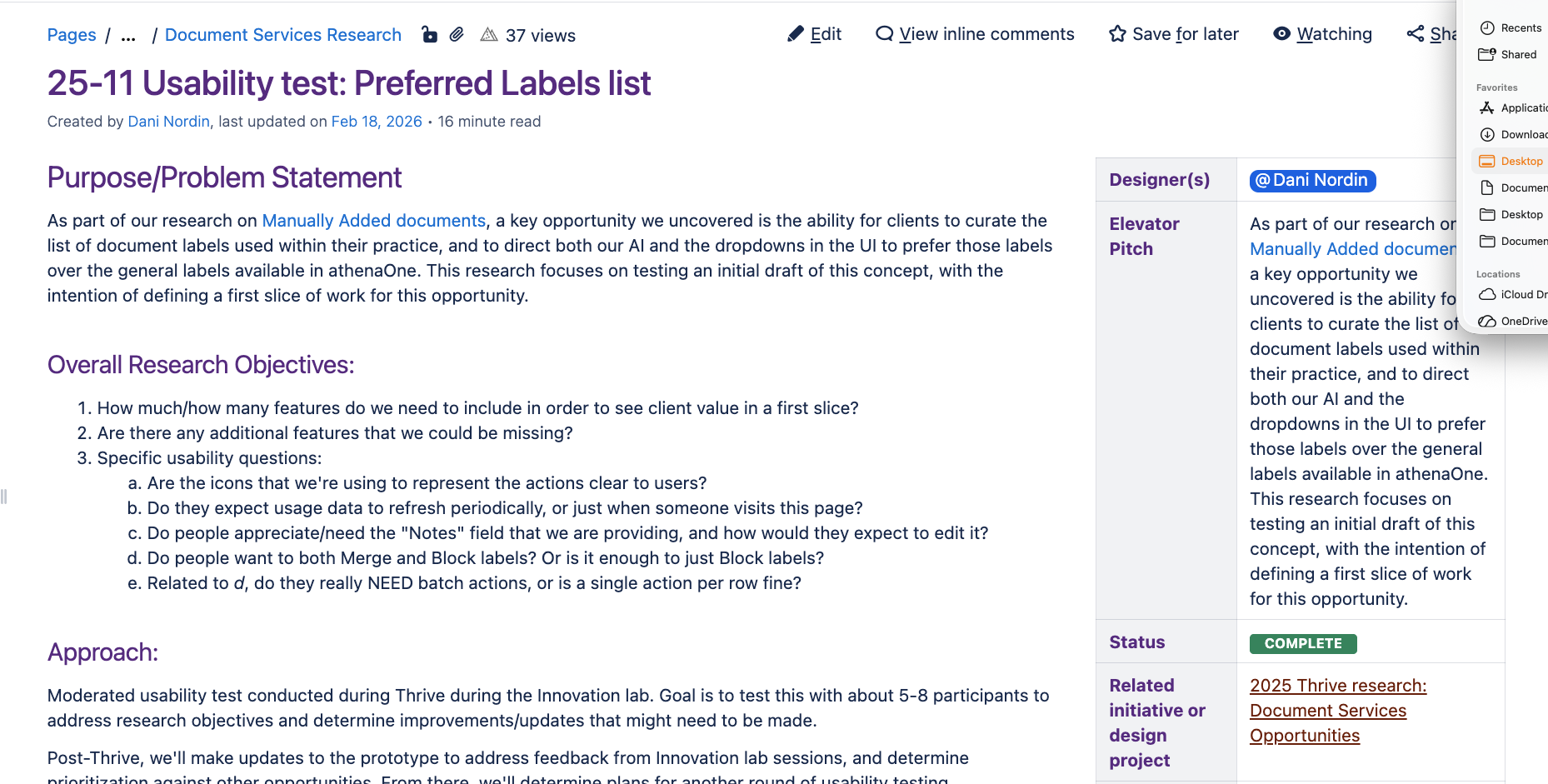
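For readers unfamiliar with MaxDiff, one common way to summarize responses is a simple count score: how often an item was picked as best, minus how often it was picked as worst, divided by how often it was shown. The sketch below illustrates that idea only. The response format and item labels are hypothetical, and this is not necessarily how the survey above was analyzed.

```python
from collections import Counter


def maxdiff_count_scores(responses):
    """Return (best - worst) / times shown for every item that appeared."""
    shown, best, worst = Counter(), Counter(), Counter()
    for response in responses:
        shown.update(response["shown"])
        best[response["best"]] += 1
        worst[response["worst"]] += 1
    return {item: (best[item] - worst[item]) / shown[item] for item in shown}


# Hypothetical responses: each one is a single screen shown to a participant.
responses = [
    {"shown": ["A", "B", "C", "D"], "best": "A", "worst": "D"},
    {"shown": ["A", "B", "C", "E"], "best": "B", "worst": "C"},
    {"shown": ["A", "C", "D", "E"], "best": "A", "worst": "D"},
]

scores = maxdiff_count_scores(responses)

# Highest perceived value first.
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking)
```

Scores range from -1 (always picked as worst) to 1 (always picked as best), so items already in progress and items under consideration can be compared on the same scale.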

For two of the opportunities we uncovered that would require complete workflow overhauls, I also created a rough prototype of each proposed experience and conducted usability testing with 9 participants. In the tests, I was able to get feedback on both prototypes along with additional context on how each participant manages documents within their practice.

One of the other major opportunities we found in our research was a need for additional document sub-classes to represent the various types of documents clients were trying to build workflows around. For this, I created a document that covered the proposed classes and rationale. I also created a detailed Markdown file with information I had gathered from our previous research on the highest-value Clinical and Administrative Document classes.

📐 Align

One of the major challenges with this project was leading and influencing without formal authority. As we found opportunities across different document workflows - many of which were owned by other zones within Clinicals - how could we give them the information they needed to prioritize these opportunities on their roadmaps?

Additionally, we were getting consistent feedback from leadership suggesting new areas to explore with our research - for example, when we were asked to dig into manually added documents after a period of time focused on external fax. How could we approach this without feeling like we were pivoting away from a well-established path?

Working with the team, we chose to progressively build up a knowledge base rather than treat each new request as a strategic pivot. In addition to an evergreen deck that we used to present insights and progress to leadership and teams, I helped the team create a section in our internal Confluence wiki filled with research insights, opportunity briefs for high-value opportunities, and other artifacts that the team could draw from. This treasure trove of artifacts helped the team keep track of all that we were learning, even if they couldn’t prioritize work in the immediate term.

Dani, cannot say how lucky I am to work with someone like you on a daily basis. You have made a big difference in the speed at which we are ideating and conducting research. - Sandesh Aravind, product management

After each phase of research, we also met as a team to sketch out roadmap opportunities and determine how to approach the next phases of work.